Kubernetes is an open-source platform used to orchestrate and manage containers and containerised applications.

A Supervisor Cluster that is configured with the vSphere networking stack supports the vSphere Network Service and the Storage Service, but it does not support vSphere Pods. Therefore, the Spherelet component is not available in such a Supervisor Cluster, and Kubernetes pods run inside Tanzu Kubernetes clusters only. Such a cluster also does not support the Harbor Registry, because the service is only used with vSphere Pods.

A vSphere Namespace created on a cluster that is configured with the vSphere networking stack also does not support running vSphere Pods, but only Tanzu Kubernetes clusters.

Supervisor Cluster Configured with the vSphere Networking Stack

A Supervisor Cluster that is configured with the vSphere networking stack only supports running Tanzu Kubernetes clusters created by using the Tanzu Kubernetes Grid Service.

In the context of vSphere with Tanzu, you can use the Tanzu Kubernetes Grid Service to provision Tanzu Kubernetes clusters on the Supervisor Cluster. You can invoke the Tanzu Kubernetes Grid Service API declaratively by using kubectl and a YAML definition. You operate Tanzu Kubernetes clusters the same way and by using the same tools as you would with standard Kubernetes clusters.

Figure 4.

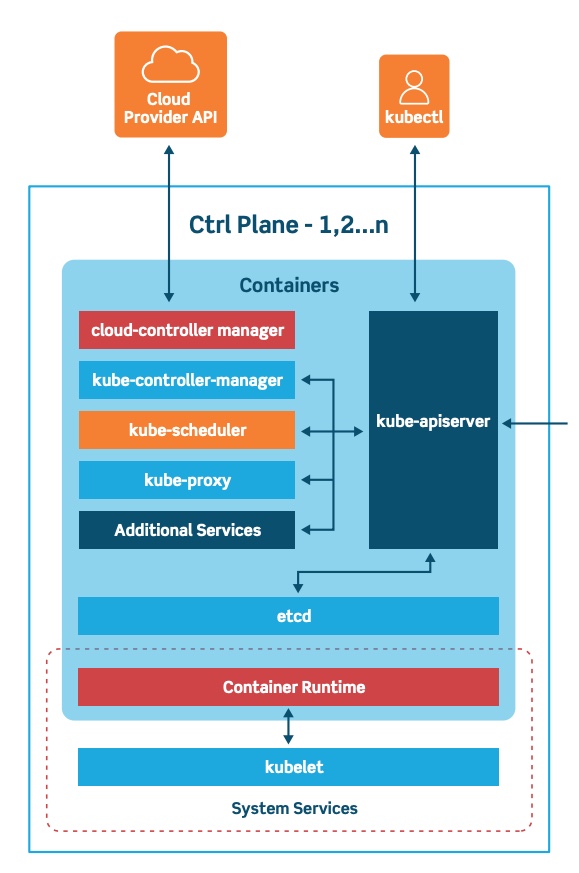
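
The declarative invocation of the Tanzu Kubernetes Grid Service mentioned above can be illustrated with a short sketch. The Python snippet below builds a cluster definition and prints it as JSON, which kubectl accepts in the same way as YAML. The API version `run.tanzu.vmware.com/v1alpha1`, the field layout, and every value in the example call (cluster name, namespace, version string, VM class, storage class) are assumptions for illustration only; check them against the API that your Supervisor Cluster actually serves.

```python
import json


def tanzu_cluster_manifest(name, namespace, version, vm_class, storage_class,
                           control_plane_count=3, worker_count=3):
    """Build a TanzuKubernetesCluster definition as a plain dict.

    The schema follows the v1alpha1 layout (an assumption, see lead-in).
    """
    # Control plane and worker nodes share the same sizing in this sketch.
    node_spec = {"class": vm_class, "storageClass": storage_class}
    return {
        "apiVersion": "run.tanzu.vmware.com/v1alpha1",
        "kind": "TanzuKubernetesCluster",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "distribution": {"version": version},
            "topology": {
                "controlPlane": {"count": control_plane_count, **node_spec},
                "workers": {"count": worker_count, **node_spec},
            },
        },
    }


if __name__ == "__main__":
    # All values below are hypothetical placeholders.
    manifest = tanzu_cluster_manifest(
        name="demo-cluster",
        namespace="demo-namespace",
        version="v1.20",
        vm_class="best-effort-small",
        storage_class="demo-storage-policy",
    )
    print(json.dumps(manifest, indent=2))
```

If the script is saved as, say, `make_cluster.py` (a hypothetical file name), its output could be piped to `kubectl apply -f -` while logged in to the target vSphere Namespace.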


Tanzu Kubernetes Clusters

A Tanzu Kubernetes cluster is a full distribution of the open-source Kubernetes software that is packaged, signed, and supported by VMware. The Virtual Machine Service module is responsible for deploying and running stand-alone VMs and VMs that make up Tanzu Kubernetes clusters.

A vSphere Namespace sets the resource boundaries where vSphere Pods and Tanzu Kubernetes clusters created by using the Tanzu Kubernetes Grid Service can run. When initially created, the namespace has unlimited resources within the Supervisor Cluster. As a vSphere administrator, you can set limits for CPU, memory, and storage, as well as the number of Kubernetes objects that can run within the namespace. A resource pool is created for each namespace in vSphere. Storage limitations are represented as storage quotas in Kubernetes.

To provide DevOps engineers with access to namespaces, as a vSphere administrator you assign permissions to users or user groups available within an identity source that is associated with vCenter Single Sign-On.

After a namespace is created and configured with resource and object limits, as well as with permissions and storage policies, as a DevOps engineer you can access the namespace to run Kubernetes workloads and create Tanzu Kubernetes clusters by using the Tanzu Kubernetes Grid Service.
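
To make the idea of namespace limits and storage quotas more concrete, the sketch below builds a standard Kubernetes `ResourceQuota` object in Python. It only shows what CPU, memory, storage, and object-count limits look like when expressed as a Kubernetes quota; it is not the object that vSphere generates for a vSphere Namespace, and the function name, quota name, and all values are hypothetical.

```python
import json


def namespace_quota(namespace, cpu, memory, storage, max_pods):
    """Build a standard Kubernetes ResourceQuota as a plain dict."""
    return {
        "apiVersion": "v1",
        "kind": "ResourceQuota",
        "metadata": {"name": f"{namespace}-quota", "namespace": namespace},
        "spec": {
            "hard": {
                "limits.cpu": cpu,            # total CPU limit across pods
                "limits.memory": memory,      # total memory limit across pods
                "requests.storage": storage,  # total storage requested by claims
                "pods": str(max_pods),        # object-count limit
            }
        },
    }


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    quota = namespace_quota("demo-namespace", "8", "16Gi", "100Gi", 20)
    print(json.dumps(quota, indent=2))
```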

vSphere DRS is integrated with the Kubernetes Scheduler on the control plane VMs, so that DRS determines the placement of vSphere Pods. When as a DevOps engineer you schedule a vSphere Pod, the request goes through the regular Kubernetes workflow and then to DRS, which makes the final placement decision. An additional process called Spherelet is created on each host. It is a kubelet that is ported natively to ESXi and allows the ESXi host to become part of the Kubernetes cluster.
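
The two-stage flow described above, where a request passes through the regular Kubernetes workflow and DRS then makes the final placement decision, can be pictured with a toy model. Everything in this sketch is hypothetical: the `Host` type, the function names, and in particular the selection rule (pick the candidate with the most free memory) are stand-ins, since the text does not say how DRS ranks hosts.

```python
from dataclasses import dataclass


@dataclass
class Host:
    name: str
    free_cpu_mhz: int
    free_memory_mb: int


def scheduler_candidates(hosts, cpu_mhz, memory_mb):
    """Stage 1 (Kubernetes workflow): keep hosts that can fit the pod."""
    return [
        h for h in hosts
        if h.free_cpu_mhz >= cpu_mhz and h.free_memory_mb >= memory_mb
    ]


def drs_place(candidates):
    """Stage 2 (DRS): make the final choice among the candidates.

    The 'most free memory' rule is an invented placeholder.
    """
    if not candidates:
        return None
    return max(candidates, key=lambda h: h.free_memory_mb)


def place_pod(hosts, cpu_mhz, memory_mb):
    """Run both stages and return the chosen host, or None if none fits."""
    return drs_place(scheduler_candidates(hosts, cpu_mhz, memory_mb))


if __name__ == "__main__":
    hosts = [
        Host("esxi-1", 1000, 2048),
        Host("esxi-2", 4000, 8192),
        Host("esxi-3", 4000, 4096),
    ]
    chosen = place_pod(hosts, cpu_mhz=2000, memory_mb=4096)
    print(chosen.name if chosen else "no host fits")
```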

Three Kubernetes control plane VMs in total are created on the hosts that are part of the Supervisor Cluster. The three control plane VMs are load balanced as each one of them has its own IP address. Additionally, a floating IP address is assigned to one of the VMs. vSphere DRS determines the exact placement of the control plane VMs on the ESXi hosts and migrates them when needed.